SK hynix advances AI memory with next-generation high-capacity modules

By Cygnus | 20 Apr 2026

Summary

- Process development: SK hynix continues to advance its next-generation DRAM technology, including work on the 1c (sixth-generation 10nm-class) process.

- AI memory demand: High-capacity, high-bandwidth memory modules are increasingly critical for AI and data center workloads.

- Ecosystem alignment: The company is expanding collaborations with major AI chipmakers such as Nvidia.

SEOUL, April 20, 2026 — SK hynix is strengthening its position in the fast-growing AI memory market, focusing on next-generation DRAM technologies and high-capacity modules designed for data center and artificial intelligence workloads.

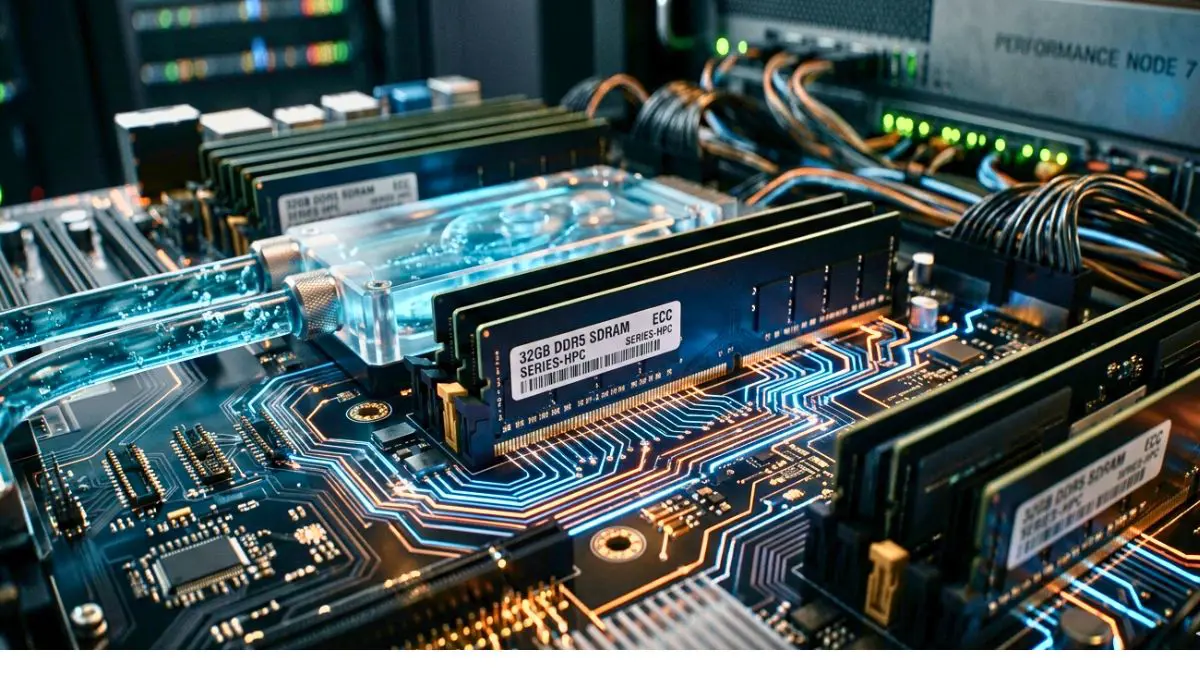

The company has been developing its 1c DRAM node, part of the sixth generation of 10-nanometer-class memory processes, aimed at improving performance and power efficiency compared to earlier nodes.

Focus on next-generation DRAM

Advancements in DRAM technology are increasingly tied to AI infrastructure, where memory bandwidth and efficiency play a crucial role.

While SK hynix has highlighted speed and energy-efficiency improvements with newer nodes, specific claims such as 9.6 Gbps speeds, a 75% power reduction, or detailed module-level performance figures have not been officially confirmed.

No confirmed mass production of SOCAMM2

Claims about mass production of a 192GB SOCAMM2 module, and about the detailed specifications of such a product, are not supported by official public disclosures.

SOCAMM (Small Outline Compression Attached Memory Module) is an emerging form factor discussed within the industry, but publicly available information about its commercialization timeline and specifications remains limited.

Nvidia platform linkage remains unconfirmed

While Nvidia is expected to continue evolving its AI platforms, there is no public confirmation linking specific SK hynix SOCAMM2 modules to a “Vera Rubin” platform.

However, SK hynix is a known supplier of advanced memory, including High Bandwidth Memory (HBM), for AI accelerators.

Growing importance of energy-efficient memory

The push toward more efficient memory solutions reflects broader trends in AI infrastructure, where power consumption and thermal management are becoming key constraints.

High-capacity and modular memory designs are expected to play a larger role as data centers scale to support increasingly complex AI models.

Why this matters

- AI infrastructure demand: Memory performance is a critical bottleneck in scaling AI systems.

- Energy efficiency: Data centers are prioritizing lower power consumption to manage costs.

- Technology competition: SK hynix competes with global players like Samsung and Micron in advanced memory.

- Ecosystem role: Close alignment with AI chipmakers is essential for long-term growth.

FAQs

Q1. What is the 1c DRAM process?

It is a sixth-generation 10nm-class memory manufacturing technology aimed at improving speed and efficiency.

Q2. What is SOCAMM?

It is an emerging modular memory form factor intended to improve system flexibility and serviceability.

Q3. Is this replacing HBM?

No. HBM remains critical for high-performance AI processing, while other memory types serve complementary roles.